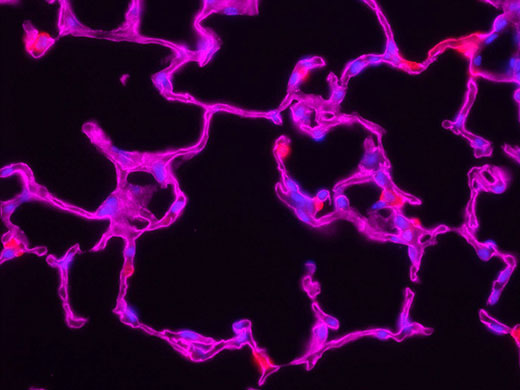

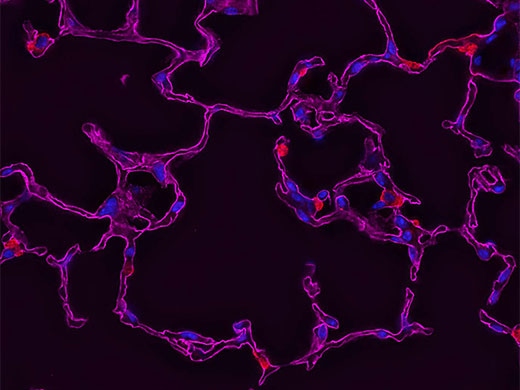

Image Processing with Deconvolution

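Deconvolution is a computationally intensive image processing technique used to improve the contrast and sharpness of images captured with a light microscope. Light microscopes are diffraction limited: they cannot resolve individual structures unless those structures are separated by more than half the wavelength of the light coming from them. Each point source below this diffraction limit is blurred by the microscope into what is known as a point spread function (PSF). In traditional widefield fluorescence microscopy, out-of-focus light from above or below the focal plane adds further blur to the captured image. Deconvolution uses the PSF of the optical system to remove or reverse this degradation and reconstruct an idealized image made up of a collection of smaller point sources.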

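The PSF of a light microscope varies with the optical properties of both the microscope and the specimen, which makes it difficult to determine the exact PSF of the complete system experimentally. For this reason, mathematical algorithms have been developed to determine the PSF and to produce the best possible reconstruction of the ideal image by deconvolution. Nearly any image acquired with a fluorescence microscope can be deconvolved, including images that are not three dimensional.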

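Commercial software packages these algorithms into cost-effective, user-friendly tools. Deconvolution algorithms differ in how they determine the point spread and noise functions of the convolution operation. The basic imaging equation is: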

g(x) = f(x) * h(x) + n(x)

x: spatial coordinate

g(x): observed image

f(x): object

h(x): point spread function

n(x): noise function

*: convolution
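The imaging equation can be simulated directly. The following is a minimal sketch, not a model of any real microscope: the Gaussian PSF, the image size, the two point sources, and the noise level are all assumptions chosen for illustration, and the helper names `gaussian_psf` and `convolve` are hypothetical.

```python
import numpy as np

def gaussian_psf(size=15, sigma=2.0):
    """Hypothetical stand-in for h(x): a normalized 2D Gaussian."""
    ax = np.arange(size) - size // 2
    xx, yy = np.meshgrid(ax, ax)
    h = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return h / h.sum()

def convolve(f, h):
    """Circular convolution f * h computed with FFTs."""
    kernel = np.zeros(f.shape, dtype=float)
    kh, kw = h.shape
    kernel[:kh, :kw] = h
    # Move the PSF center to pixel (0, 0) so the result is not shifted.
    kernel = np.roll(kernel, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.real(np.fft.ifft2(np.fft.fft2(f) * np.fft.fft2(kernel)))

rng = np.random.default_rng(0)

f = np.zeros((64, 64))                # object f(x): two point sources (assumed)
f[20, 20] = 100.0
f[40, 45] = 60.0

h = gaussian_psf()                    # point spread function h(x) (assumed Gaussian)
n = rng.normal(0.0, 0.05, f.shape)    # noise function n(x) (assumed Gaussian noise)

blurred = convolve(f, h)              # f(x) * h(x)
g = blurred + n                       # observed image g(x)
```

Each point source in `f` is spread over the footprint of `h` in `g`, so the peak intensity drops while the total intensity of `blurred` stays equal to that of `f`.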

Deblurring Algorithms

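Deblurring algorithms apply an operation to each 2D plane of a 3D image stack. A common deblurring technique, nearest neighbor, operates on each z-plane by blurring the neighboring planes (z+1 and z-1, using a digital blurring filter) and then subtracting the blurred planes from the z-plane. Multi-neighbor techniques extend this concept to a user-selectable number of planes. A 3D stack is processed by applying the algorithm to every plane in the stack.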
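The nearest-neighbor scheme can be sketched in a few lines. This is an illustrative sketch under stated assumptions: a simple box filter stands in for the digital blurring filter, the `weight` factor and the reuse of the edge plane at the top and bottom of the stack are arbitrary choices, and the function names are hypothetical.

```python
import numpy as np

def box_blur(plane, radius=2):
    """Mean filter used here as a stand-in for the digital blurring filter."""
    k = 2 * radius + 1
    padded = np.pad(plane, radius, mode="edge")
    out = np.zeros(plane.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + plane.shape[0], dx:dx + plane.shape[1]]
    return out / (k * k)

def nearest_neighbor_deblur(stack, weight=0.45, radius=2):
    """For each z-plane, subtract the blurred z+1 and z-1 planes."""
    stack = np.asarray(stack, dtype=float)
    nz = stack.shape[0]
    result = np.empty_like(stack)
    for z in range(nz):
        above = stack[min(z + 1, nz - 1)]   # assumption: reuse the edge plane at the ends
        below = stack[max(z - 1, 0)]
        haze = weight * (box_blur(above, radius) + box_blur(below, radius))
        result[z] = np.clip(stack[z] - haze, 0.0, None)  # intensities stay non-negative
    return result

# Synthetic 3-plane stack: uniform haze everywhere, one bright point in the middle plane.
stack = np.ones((3, 16, 16))
stack[1, 8, 8] = 11.0
deblurred = nearest_neighbor_deblur(stack)

contrast_before = stack[1, 8, 8] / stack[1, 0, 0]
contrast_after = deblurred[1, 8, 8] / deblurred[1, 0, 0]
```

In this toy stack the haze contributed by the neighboring planes is removed from the middle plane, so the point stands out more strongly against the background.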

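This class of deblurring algorithms is computationally efficient because it uses relatively simple calculations on a small number of image planes. However, these approaches have several drawbacks. For example, structures whose PSFs overlap in neighboring z-planes can be localized in planes where they do not belong, which changes the apparent position of the object. This problem is particularly severe when deblurring a single 2D image, because such an image often contains diffraction rings or light from out-of-focus structures that can be sharpened as if they were in the correct focal plane.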

Inverse Filter Algorithms

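An inverse filter works by taking the Fourier transform of the image and dividing it by the Fourier transform of the PSF. Because division in Fourier space is equivalent to deconvolution in real space, inverse filtering is the simplest way to reverse the convolution in an image. The calculation is fast, comparable in speed to the 2D deblurring methods. However, the usefulness of the method is limited by noise amplification: wherever the Fourier transform of the PSF is small, the division magnifies small noise variations in the Fourier transform of the image. As a result, blur removal has to be compromised to limit the gain in noise. The technique can also introduce an artifact known as ringing.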
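The noise amplification of a plain inverse filter is easy to reproduce on synthetic data. The sketch below assumes a Gaussian PSF, circular (FFT-based) convolution, and additive Gaussian noise; `gaussian_otf`, `inverse_filter`, and the test object are hypothetical names and values chosen for illustration.

```python
import numpy as np

def gaussian_otf(shape, sigma=1.0):
    """Fourier transform of a hypothetical Gaussian PSF centered on pixel (0, 0)."""
    y = np.fft.fftfreq(shape[0]) * shape[0]   # wrapped pixel offsets 0, 1, ..., -1
    x = np.fft.fftfreq(shape[1]) * shape[1]
    yy, xx = np.meshgrid(y, x, indexing="ij")
    h = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return np.fft.fft2(h / h.sum())

def inverse_filter(g, H, eps=1e-12):
    """Divide by the PSF transform in Fourier space; eps only guards exact zeros."""
    H_safe = np.where(np.abs(H) < eps, eps, H)
    return np.real(np.fft.ifft2(np.fft.fft2(g) / H_safe))

def rms(a):
    return float(np.sqrt(np.mean(a**2)))

rng = np.random.default_rng(1)
f = np.zeros((64, 64))
f[20, 20] = 1.0
f[40, 45] = 0.6

H = gaussian_otf(f.shape)
g_clean = np.real(np.fft.ifft2(np.fft.fft2(f) * H))     # blurred, no noise
noise_sigma = 0.01
g_noisy = g_clean + rng.normal(0.0, noise_sigma, f.shape)

err_clean = rms(inverse_filter(g_clean, H) - f)   # near-perfect recovery
err_noisy = rms(inverse_filter(g_noisy, H) - f)   # dominated by amplified noise
```

Without noise the division recovers the object almost exactly. With even a small amount of noise, the restoration error becomes far larger than the noise that was added, because the high spatial frequencies are divided by very small values of `H`.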

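The additional noise and ringing can be reduced by making assumptions about the structure of the object that gave rise to the image. For example, if the object is assumed to be relatively smooth, noisy solutions with rough edges can be ruled out. This regularization can be applied in a single step within the inverse filter, or it can be applied iteratively. The result is an image with its high-frequency Fourier components removed, which gives it a smoother appearance. Much of the "roughness" removed from the image lies at Fourier frequencies beyond the resolution limit, so the process does not eliminate structures recorded by the microscope. However, because some detail can be lost, software implementations of inverse filters usually include an adjustable parameter that lets the user control the tradeoff between smoothing and noise amplification. In many image processing programs, these algorithms go by a variety of names, including Wiener deconvolution, regularized least squares, linear least squares, and Tikhonov-Miller regularization.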
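A one-step regularized inverse filter with a single adjustable parameter can be sketched as follows. This is a Tikhonov-style filter (equivalent to a Wiener filter with a constant noise-to-signal term), shown as an assumption-laden illustration rather than the formula used by any particular software package; the Gaussian PSF, the parameter name `k`, and the helper names are hypothetical.

```python
import numpy as np

def gaussian_otf(shape, sigma=1.0):
    """Fourier transform of a hypothetical Gaussian PSF centered on pixel (0, 0)."""
    y = np.fft.fftfreq(shape[0]) * shape[0]
    x = np.fft.fftfreq(shape[1]) * shape[1]
    yy, xx = np.meshgrid(y, x, indexing="ij")
    h = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return np.fft.fft2(h / h.sum())

def regularized_inverse(g, H, k):
    """Inverse filter with regularization: conj(H) G / (|H|^2 + k).

    k is the user-adjustable parameter: larger k means more smoothing,
    smaller k means more deblurring but also more noise amplification.
    """
    G = np.fft.fft2(g)
    return np.real(np.fft.ifft2(np.conj(H) * G / (np.abs(H)**2 + k)))

def rms(a):
    return float(np.sqrt(np.mean(a**2)))

def roughness(img):
    """Sum of squared differences between neighboring pixels (circular)."""
    return float(np.sum((img - np.roll(img, 1, axis=0))**2)
                 + np.sum((img - np.roll(img, 1, axis=1))**2))

rng = np.random.default_rng(2)
f = np.zeros((64, 64))
f[20, 20] = 1.0
f[40, 45] = 0.6
H = gaussian_otf(f.shape)
g = np.real(np.fft.ifft2(np.fft.fft2(f) * H)) + rng.normal(0.0, 0.01, f.shape)

nearly_naive = regularized_inverse(g, H, k=1e-12)  # almost a plain inverse filter
sharp = regularized_inverse(g, H, k=1e-3)          # mild regularization
smooth = regularized_inverse(g, H, k=1e-1)         # strong regularization
```

Comparing `rms(sharp - f)` with `rms(nearly_naive - f)` shows how much noise the regularization suppresses, while `roughness(smooth)` versus `roughness(sharp)` shows the smoothing side of the tradeoff.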

Constrained Iterative Algorithms

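A typical constrained iterative algorithm improves on the performance of inverse filters by applying additional algorithms to restore photons to their correct position. These methods operate in successive cycles, each based on the results of the previous cycle, hence the term iterative. First, an initial estimate of the object is made and convolved with the PSF. The resulting "blurred estimate" is compared with the raw image to compute an error criterion that represents how similar the blurred estimate is to the raw image. Using the information contained in the error criterion, a new iteration takes place: a new estimate is convolved with the PSF, and a new error criterion is computed. The best estimate is the one that minimizes the error criterion. As the algorithm progresses, each time the software determines that the error criterion has not yet been minimized, a new estimate is blurred and the error criterion is recomputed. The cycle repeats until the error criterion is minimized or reaches a defined threshold. The final restored image is the object estimate at the last iteration.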
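The estimate, blur, compare, and update cycle can be sketched as a short loop. The sketch below uses a Van Cittert-style additive update with a non-negativity constraint and an RMS error criterion; these are assumptions chosen for brevity, not the method of any specific product. The demo image is noise free so that the behavior is easy to check; noisy data would call for noise suppression before or during the iterations. All names and parameter values are hypothetical.

```python
import numpy as np

def gaussian_otf(shape, sigma=1.0):
    """Fourier transform of a hypothetical Gaussian PSF centered on pixel (0, 0)."""
    y = np.fft.fftfreq(shape[0]) * shape[0]
    x = np.fft.fftfreq(shape[1]) * shape[1]
    yy, xx = np.meshgrid(y, x, indexing="ij")
    h = np.exp(-(xx**2 + yy**2) / (2.0 * sigma**2))
    return np.fft.fft2(h / h.sum())

def blur(img, H):
    """Convolve an image with the PSF (circular convolution via FFT)."""
    return np.real(np.fft.ifft2(np.fft.fft2(img) * H))

def rms(a):
    return float(np.sqrt(np.mean(a**2)))

def constrained_iterative(g, H, max_iter=50, tol=1e-4, gamma=1.0):
    estimate = np.clip(g, 0.0, None)          # initial estimate: the raw image
    best, best_err = estimate, np.inf
    history = []
    for _ in range(max_iter):
        blurred = blur(estimate, H)           # "blurred estimate"
        err = rms(g - blurred)                # error criterion vs. the raw image
        history.append(err)
        if err >= best_err:                   # error no longer shrinking: stop
            break
        best, best_err = estimate, err
        if err < tol:                         # defined threshold reached: stop
            break
        # New estimate: add the residual, then enforce non-negative intensities.
        estimate = np.clip(estimate + gamma * (g - blurred), 0.0, None)
    return best, history

f = np.zeros((64, 64))
f[20, 20] = 1.0
f[40, 45] = 0.6
H = gaussian_otf(f.shape)
g = blur(f, H)                                # noise-free synthetic raw image

restored, history = constrained_iterative(g, H)
```

`history` records the error criterion at each iteration, and `restored` is the estimate with the lowest recorded error. The non-negativity clip is the "constraint" here: it rules out solutions with negative intensities, which cannot correspond to a real fluorescence signal.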

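Constrained iterative algorithms give good results but are not suitable for every imaging setup. They require long calculation times and place a high demand on computer processors. This limitation can be overcome with modern technologies such as GPU-based processing, which improves speed significantly. To take full advantage of these algorithms, 3D images are best, but 2D images can also be used with limited performance.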

Confocal, Multiphoton, and Super Resolution

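Deconvolution is sometimes recommended as an alternative to confocal microscopy [1]. This is not strictly true, however, because deconvolution techniques can also be applied to images acquired through the pinhole aperture of a confocal microscope. In fact, images acquired with a confocal, multiphoton, or super-resolution light microscope can all be restored.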

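Combining the optical improvement of confocal or super-resolution microscopy with deconvolution improves sharpness beyond what either technique generally achieves alone. However, the major benefit of deconvolving images from these specialized microscopes is reduced noise in the final image. This is especially useful for low-light applications such as live-cell super-resolution or confocal imaging. Deconvolution of multiphoton images has also been used successfully to remove noise and improve contrast. In all cases, care must be taken to apply an appropriate PSF, especially when the confocal pinhole aperture is adjustable.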

1. Shaw, Peter J., and David J. Rawlins. "The point-spread function of a confocal microscope: its measurement and use in deconvolution of 3-D data." Journal of Microscopy 163, no. 2 (1991): 151-165.


Deconvolution in Practice

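How software implements a deconvolution algorithm dramatically affects processing speed and image quality. An algorithm can be optimized to reduce the number of iterations and accelerate convergence to a stable estimate. For example, an unoptimized Jansson-Van Cittert algorithm typically needs 50 to 100 iterations to converge to an optimal estimate. By prefiltering the raw image to suppress noise and applying an additional error criterion as a correction during the first two iterations, the algorithm converges in only 5 to 10 iterations.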

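When an empirical PSF is used, it is critical that the PSF be of high quality with minimal noise. For this reason, many commercial software packages include preprocessing routines that reduce noise and enforce radial symmetry by averaging the Fourier transform of the PSF. Many packages also enforce axial symmetry in the PSF and assume that on-axis aberration is absent. These steps reduce the noise and aberration in the empirical PSF and make a significant difference in the quality of the restoration.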

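Preprocessing can also be applied to the raw image using routines such as background subtraction and flatfield correction. These operations can improve the signal-to-noise ratio and remove artifacts that would be detrimental to the final image.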
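Both preprocessing steps are simple array operations. The sketch below uses the common flatfield formula (raw minus dark, divided by flat minus dark, rescaled by the mean gain) and a constant background estimated from a low percentile. The reference images, the percentile, and the function names are assumptions made for illustration; real acquisition software may estimate these differently.

```python
import numpy as np

def flatfield_correct(raw, flat, dark, eps=1e-6):
    """Remove uneven illumination using a flat reference and a dark reference."""
    gain = np.clip(flat.astype(float) - dark, eps, None)   # per-pixel illumination
    return (raw.astype(float) - dark) * gain.mean() / gain

def subtract_background(img, percentile=5.0):
    """Subtract a constant background estimated from a low percentile."""
    background = np.percentile(img, percentile)
    return np.clip(img - background, 0.0, None)

# Synthetic example: a flat scene with one bright spot, vignetted illumination,
# and a constant camera offset (all values are assumed).
yy, xx = np.indices((32, 32))
illum = 1.0 - 0.4 * ((yy - 15.5)**2 + (xx - 15.5)**2) / (2 * 15.5**2)
scene = np.full((32, 32), 50.0)
scene[10, 12] = 250.0
dark = np.full((32, 32), 100.0)

raw = scene * illum + dark          # what the camera records
flat = 1000.0 * illum + dark        # image of a uniform fluorescent reference

corrected = flatfield_correct(raw, flat, dark)
cleaned = subtract_background(corrected)
```

After correction, the vignetting no longer modulates the scene, and the background subtraction leaves only the bright spot above zero.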

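In general, the more faithfully the data are represented, the more memory and processing time the computer needs to deconvolve an image. In the past, images were divided into subvolumes to fit within the available processing power, but modern technologies have reduced this constraint and extended deconvolution to larger data sets.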

Olympus Deconvolution Solutions

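Olympus cellSens imaging software features TruSight deconvolution, which combines commonly used deconvolution algorithms with new techniques designed for images acquired on Olympus FV3000 and IXplore SpinSR systems, providing a full portfolio of tools for image processing and analysis.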

Lauren Alvarenga,

Scientific Solutions Group

Olympus Corporation of the Americas
